ChatSan Team

Published on April 11, 2026

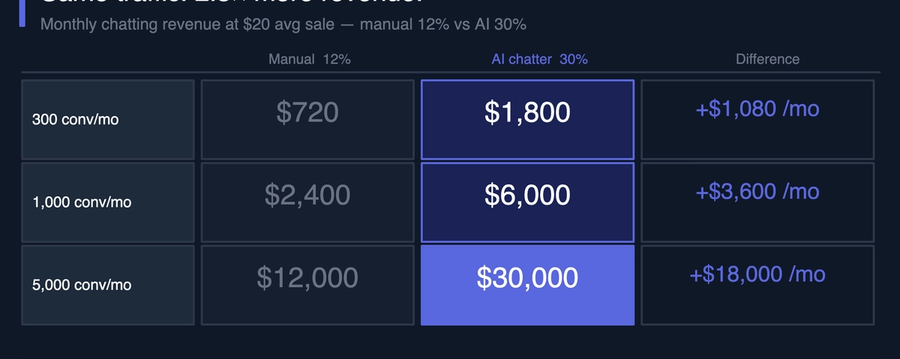

Your manual chatters convert at 12%. AI chatting tools target 30%. That gap is worth $1,080 a month at 300 conversations, and $3,600 at 1,000. Here's what drives it and how to measure it on your own operation.

Your manual chatters are running at 12%. Every AI chatting tool you've seen claims 30%. That gap is real, and it's structural. It doesn't come from AI being better at conversation.

It comes from a funnel that filters non-buyers before the chatter opens a single message. That's the short answer.

In OFM chatting, conversion rate measures one thing: the percentage of new conversations that result in a sale.

The formula:

Conversion rate = number of sales ÷ number of new conversations × 100

If your agency handles 1,000 new Telegram conversations in a month and 120 result in a purchase, your conversion rate is 12%.
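To run the same formula on your own numbers, here is a minimal Python sketch. The function name `conversion_rate` is an illustrative choice, not part of any tool's API.

```python
def conversion_rate(sales: int, new_conversations: int) -> float:
    """Percentage of new conversations that ended in a sale."""
    if new_conversations <= 0:
        raise ValueError("new_conversations must be positive")
    return 100 * sales / new_conversations


# The example from the text: 120 purchases out of 1,000 new conversations.
rate = conversion_rate(sales=120, new_conversations=1000)
print(f"{rate:.0f}%")  # 12%
```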

That's a different metric from revenue. Conversion rate measures how efficiently you turn conversations into buyers, not how much each buyer spends. An agency running a 30% rate on a low-LTV audience can generate less total revenue than one at 12% on a high-LTV audience.

Both numbers matter. Conversion rate is the metric most directly affected by the quality of the pre-sale process.

The 8-15% manual benchmark isn't a failure. It's a structural outcome.

Here's what most agencies get wrong when they look at this number: they attribute the gap to their chatters' skill. They hire better, they train harder, they build scripts.

The problem isn't the chatter. It's the structure they're working inside.

A human chatter starts every new conversation from zero. They don't know whether this lead buys.

They have to earn that information through warmup and rapport-building. On average, that discovery runs 20 to 40 minutes per lead.

If 80% of inbound leads won't buy regardless of conversation quality, 80% of chatter time goes toward producing that discovery and nothing else.
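A rough way to size that discovery cost, as a sketch only: it assumes every lead gets the same 20 to 40 minutes of warmup, and the lead count of 100 is a hypothetical placeholder, not a figure from any agency.

```python
def discovery_minutes(leads: int, non_buyer_share: float, minutes_per_lead: float) -> float:
    """Minutes of chatter time spent on leads who were never going to buy."""
    return leads * non_buyer_share * minutes_per_lead


leads = 100  # hypothetical batch size, swap in your own inbound volume
low = discovery_minutes(leads, non_buyer_share=0.80, minutes_per_lead=20)
high = discovery_minutes(leads, non_buyer_share=0.80, minutes_per_lead=40)
print(f"{low / 60:.1f} to {high / 60:.1f} chatter hours spent on non-buyers")
```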

The conversion rate sits where it does because of two things: the buyer-to-non-buyer ratio in the inbound, and how efficiently the chatter identifies buyers. Manual chatters can't change the first factor.

Human capacity caps their ability to improve the second. The limiting factors: volume they can run at once, method consistency, focus on hour 7 of a shift.

That's what 8-15% represents. It's how well a skilled human performs against those constraints, at volume, reliably.

So why does a structured AI chatter consistently hit higher conversion? Is it just hype?

No. But precision matters here.

The 30% figure is an industry benchmark drawn from the same data that produces the 8-15% manual rate. It's not a ChatSan-specific verified figure. It reflects what a well-structured, consistently run pre-sale funnel produces on comparable inbound traffic.

Your results will vary depending on traffic quality, creator niche, and how the tool is configured.

The gap between 12% and 30% comes from three structural advantages that compound at volume.

1. Consistency at scale

A human chatter running 4 simultaneous conversations on hour 7 doesn't produce the same warmup as the same chatter on hour 2. Attention splits. The emotional register drops.

The relational phase shortens. The ask arrives before the lead is ready.

An AI chatter runs the same method on every conversation, at every hour, at any volume. The 200th conversation is identical to the first. Boring advantage. Consequential one.

2. No cold starts

Every conversation a chatter opens has already been through qualification. They don't have to discover the lead isn't a buyer. The funnel already filtered them. That changes everything about how chatter time gets spent.

3. Full phase discipline

A structured GFE funnel runs every phase in sequence, regardless of how the lead behaves or how many conversations run in parallel. A human chatter under volume pressure skips the relational phase.

They rush the warmup and move to the ask too early. The emotional investment hasn't reached the level it needs when the ask arrives.

Full phase discipline on every conversation is what produces the benchmark figure. It's difficult to sustain manually at scale. It's the default behavior of a structured AI funnel.

The conversion rate gap only matters when it's attached to a real operation. Here's what the difference between 12% and 30% produces at three agency scales.

Assumptions across all scenarios: an average sale value of $20 (the industry-average LTV for PPV/content), a 12% manual rate against a 30% AI rate, and conversions calculated on total conversation volume.

| | Manual (12%) | AI chatter (30%) |
| --- | --- | --- |
| Conversations | 300 | 300 |
| Conversions | 36 | 90 |
| Revenue from chatting | $720 | $1,800 |
| Difference | | +$1,080/month |

At 300 conversations per month, the conversion rate gap is worth $1,080/month ($12,960/year) on the same traffic, with no changes to acquisition.

| | Manual (12%) | AI chatter (30%) |
| --- | --- | --- |
| Conversations | 1,000 | 1,000 |
| Conversions | 120 | 300 |
| Revenue from chatting | $2,400 | $6,000 |
| Difference | | +$3,600/month |

At 1,000 conversations per month, the gap is $3,600/month ($43,200/year).

| | Manual (12%) | AI chatter (30%) |
| --- | --- | --- |
| Conversations | 5,000 | 5,000 |
| Conversions | 600 | 1,500 |
| Revenue from chatting | $12,000 | $30,000 |
| Difference | | +$18,000/month |

At 5,000 conversations per month, the conversion rate gap generates $18,000/month in additional revenue from the same inbound traffic. That's $216,000/year with no additional acquisition spend.
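The three scenarios can be reproduced with a few lines of Python. This is a sketch under the article's stated assumptions ($20 average sale, 12% manual, 30% AI); the function names are illustrative, and you can swap in your own volume and rates.

```python
AVG_SALE_VALUE = 20  # dollars per sale, the assumption used in the scenarios


def monthly_revenue(conversations: int, rate_pct: float, avg_sale: float = AVG_SALE_VALUE) -> float:
    """Chatting revenue for one month at a given conversion rate."""
    return conversations * rate_pct / 100 * avg_sale


def monthly_gap(conversations: int, manual_pct: float = 12, ai_pct: float = 30) -> float:
    """Extra monthly revenue from the higher rate on the same traffic."""
    return monthly_revenue(conversations, ai_pct) - monthly_revenue(conversations, manual_pct)


for volume in (300, 1000, 5000):
    gap = monthly_gap(volume)
    print(f"{volume:>5} conversations: +${gap:,.0f}/month, +${gap * 12:,.0f}/year")
```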

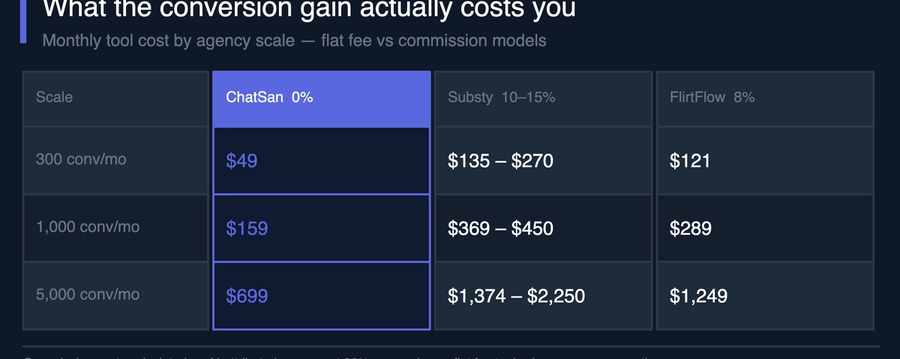

Revenue is one half of the equation. The cost to produce that conversion is the other.

The most common pricing model among AI chatting tools combines a base subscription with a commission on AI-generated sales. At scale, that commission compounds against the revenue gains.

Commission costs are calculated on AI-attributed revenue at the conversion rates above. Flat-fee tools charge for conversations processed; commission tools charge a percentage of every sale the AI produces.

At 1,000 conversations per month, a flat-fee AI chatter at 30% conversion generates $6,000 in chatting revenue. The cost: $159. The same agency on a commission-based tool generating the same rate pays $369–$450, before any commission that comes off every sale.

That's the real variable most agencies miss. The conversion rate improvement and the tool cost aren't independent. Which tool you choose determines how much of the conversion gain you actually keep.
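To see how pricing eats into the gain, here is a small sketch. The $6,000 revenue, $159 flat fee, and $369 base fee come from the example above. The commission rate is NOT stated in this article, so `HYPOTHETICAL_COMMISSION` is a placeholder you should replace with the rate on your own contract.

```python
def net_flat_fee(revenue: float, flat_fee: float) -> float:
    """Revenue kept after a flat monthly fee."""
    return revenue - flat_fee


def net_commission(revenue: float, base_fee: float, commission_rate: float) -> float:
    """Revenue kept after a base subscription plus a cut of every sale."""
    return revenue - base_fee - revenue * commission_rate


revenue = 6000  # 1,000 conversations at 30% and $20 per sale

print(net_flat_fee(revenue, flat_fee=159))  # 5841

# Placeholder rate for illustration only; not a quoted price from any tool.
HYPOTHETICAL_COMMISSION = 0.10
print(net_commission(revenue, base_fee=369, commission_rate=HYPOTHETICAL_COMMISSION))
```

The point of the second function is that the commission term scales with revenue, so the cost grows every time the conversion rate does.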

Supercreator claims "90-95% conversation activity rate." That sounds better than 30% conversion. It isn't the same metric.

Activity rate measures whether a conversation got a reply. If a lead sends one message and the AI responds, that's an active conversation. It says nothing about whether that lead bought anything.

Conversion rate measures whether a conversation ended in a sale. That's the metric that connects to revenue.

A tool can produce 95% activity and 8% conversion simultaneously. The lead engaged. They didn't buy. The activity rate tells you your inbox is busy. The conversion rate tells you whether that inbox activity is worth running.
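The two metrics can be computed side by side from the same conversation log. This is a minimal sketch: the `Conversation` record and its two fields are assumptions about how you might store your data, and the sample log simply recreates the 95% / 8% case described above.

```python
from dataclasses import dataclass


@dataclass
class Conversation:
    got_reply: bool   # the lead's message received a response
    purchased: bool   # the conversation ended in a sale


def activity_rate(conversations: list[Conversation]) -> float:
    """Share of conversations that got a reply, in percent."""
    return 100 * sum(c.got_reply for c in conversations) / len(conversations)


def purchase_conversion_rate(conversations: list[Conversation]) -> float:
    """Share of conversations that ended in a purchase, in percent."""
    return 100 * sum(c.purchased for c in conversations) / len(conversations)


# Illustrative log of 100 conversations: 95 got a reply, 8 of those bought.
log = (
    [Conversation(got_reply=True, purchased=True)] * 8
    + [Conversation(got_reply=True, purchased=False)] * 87
    + [Conversation(got_reply=False, purchased=False)] * 5
)

print(f"Activity rate:   {activity_rate(log):.0f}%")             # 95%
print(f"Conversion rate: {purchase_conversion_rate(log):.0f}%")  # 8%
```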

The 30% conversion benchmark ChatSan targets refers to purchase conversion, not message activity. When evaluating any AI chatting tool, ask which metric they're quoting. The answer tells you a lot about what the tool is actually optimized to produce.

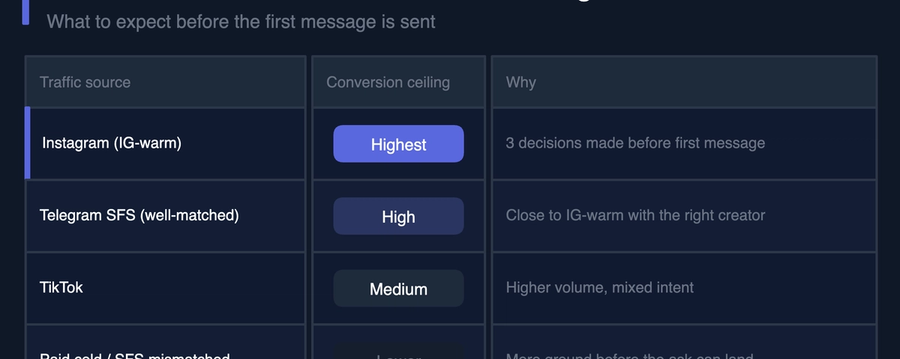

The conversion rate an AI chatter can achieve is partly set before the first message is sent. Lead source determines baseline intent.

Instagram-to-Telegram funnels consistently produce the warmest inbound. A lead who clicked through a Reel, found the DM link in the bio, and initiated contact has already made several decisions before the AI chatter sees them. Baseline conversion rates on this traffic run higher.

Paid cold traffic brings higher volume but lower intent. The lead saw an ad, not a creator they were already interested in. The funnel has more work to do to build trust before the ask lands.

Organic Telegram group traffic varies by niche alignment. A lead from a well-matched SFS collab performs close to IG-warm. A lead from a mismatched promotion doesn't.

Traffic source is the one variable the AI chatter can't fix. If the inbound pool is low-intent, the conversion rate ceiling drops. For the full breakdown of how to filter pre-sale, check out The Real Reason OFM Agencies Lose Leads on Telegram.
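If you tag each new conversation with its source, you can check this on your own traffic. The sketch below is one way to do it; the source labels and the six sample records are made-up placeholders to show the shape of the data, not measured results.

```python
from collections import defaultdict


def conversion_by_source(conversations):
    """Return {source: conversion rate in percent} from (source, purchased) pairs."""
    totals = defaultdict(int)
    sales = defaultdict(int)
    for source, purchased in conversations:
        totals[source] += 1
        if purchased:
            sales[source] += 1
    return {source: 100 * sales[source] / totals[source] for source in totals}


# Placeholder records only: (source label, did the conversation end in a sale?)
sample = [
    ("ig_to_telegram", True),
    ("ig_to_telegram", False),
    ("paid_cold", False),
    ("paid_cold", False),
    ("sfs_collab", True),
    ("sfs_collab", False),
]

for source, rate in conversion_by_source(sample).items():
    print(f"{source}: {rate:.0f}%")
```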

Not every AI chatter that claims 30% actually produces it. The conversion rate is a function of how the tool structures the pre-sale conversation, not just the fact that it uses AI.

Phase structure. The benchmark figure comes from a complete pre-sale arc, not from individually good replies. A tool without phase structure generates responses. It doesn't build toward anything, so the emotional state at hand-off is whatever it happens to be.

Lead scoring. A tool that scores leads at hand-off and sends that score to the chatter changes how they allocate their time. Higher scores get priority. The human close is more precise when the chatter knows, before they open the conversation, what this lead is worth.
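As a sketch of what "higher scores get priority" means in practice, the snippet below sorts a hand-off queue by score. The `Handoff` record, the `buyer_score` field, and the 0-100 scale are all hypothetical; real tools may expose the score differently.

```python
from dataclasses import dataclass


@dataclass
class Handoff:
    lead_id: str
    buyer_score: float  # hypothetical 0-100 score attached at hand-off


def prioritize(queue: list[Handoff]) -> list[Handoff]:
    """Order hand-offs so the highest-scored leads are opened first."""
    return sorted(queue, key=lambda h: h.buyer_score, reverse=True)


queue = [Handoff("lead-a", 42), Handoff("lead-b", 91), Handoff("lead-c", 67)]
for handoff in prioritize(queue):
    print(handoff.lead_id, handoff.buyer_score)
```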

Persona fidelity. An AI chatter that holds a consistent creator personality across a 50-message conversation sees higher engagement than one that drifts or sounds generic. Leads disengage when the character breaks.

Humanizer features. Typing simulation, message delays, emoji reactions, and timing variance recreate the basic texture of a real conversation and cut bot detection. A lead who suspects they're talking to a bot disengages before the funnel can complete.

None of these are magic. They're the conditions under which a structured GFE funnel produces its designed output. A tool that skips any of them will see conversion rates below the benchmark. Not because AI chatting doesn't work, but because the tool isn't running the full method.

My read on the conversion rate gap: it's not primarily a technology story. It's an operations story. The agencies that close the gap aren't working better traffic or producing better content. They're running a structured pre-sale funnel on every new conversation, consistently, at volume.

What that looks like for your chatters: they spend their shifts closing warm, scored leads instead of finding out which conversations are worth their time. The warmup phase moves off their plate entirely. The hand-off arrives with a buyer score already attached.

The revenue math at every scale makes the case. At 1,000 conversations per month, the difference between 12% and 30% is $3,600/month in additional revenue from the same inbound. At $159 flat, the cost to produce it leaves $3,441 net before accounting for any reduction in chatter hours.

Actually, "improvement" is the wrong word. It's a different operation.

Is 30% guaranteed?

The 30% figure is an industry benchmark for well-structured AI pre-sale funnels on warm inbound traffic, not a guaranteed floor. Agencies running paid cold traffic or mismatched SFS promos will see lower numbers. Agencies with IG-warm inbound and a complete persona configured often hit or exceed it. The benchmark is real, but it requires the right inputs to produce.

What's the difference between activity rate and conversion rate?

Activity rate is whether a conversation got a reply. Conversion rate is whether that conversation ended in a purchase. A tool can hit 95% activity and 8% conversion simultaneously. When evaluating any AI chatting tool, ask which metric they're quoting. Some tools optimize for activity because it's easy to inflate. Conversion rate is what actually drives revenue.

How do I calculate my conversion rate correctly?

The 30% benchmark is calculated across all new inbound conversations, including leads who went quiet in phase 2, bounced after the first message, or never replied again. It's not filtered to conversations that ran the full arc. If you measure only completed-funnel conversations, your rate will look higher. Use total new conversations as the denominator to get a number you can actually compare across methods.
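The denominator effect is easy to see with numbers. In this sketch the 1,000 conversations and 120 sales match the earlier example, while the 400 completed-funnel conversations is a hypothetical figure chosen for illustration.

```python
def rate_pct(sales: int, denominator: int) -> float:
    """Sales as a percentage of whichever conversation count you divide by."""
    if denominator <= 0:
        raise ValueError("denominator must be positive")
    return 100 * sales / denominator


new_conversations = 1000  # every new inbound conversation in the period
completed_funnel = 400    # hypothetical: conversations that ran the full arc
sales = 120

print(f"All new conversations: {rate_pct(sales, new_conversations):.0f}%")  # 12%
print(f"Completed-funnel only: {rate_pct(sales, completed_funnel):.0f}%")   # 30%
```

Same operation, same sales, two very different headline numbers. Only the first is comparable to the benchmark.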
